A love letter to tools that changed everything for me.

Programming over the years

Programming got vastly more varied compared to when I started dabbling in AmigaBASIC in the mid-1990s. Back then, you could buy one very big book about the computer you were programming and be 99% there. That book, full of dog-ears and Post-its, lay next to you while you hacked away in your monochrome editor, always in reach.

Nowadays the book on your frontend web framework can be thicker than what a C64 programmer needed to write a complete game. On the other hand, the information for everything that we need to write code today is usually no more than one click away.

Nobody can imagine paying for developer documentation anymore – both Microsoft and Apple offer their documentation on the web for free for everyone. And don’t even get me started on open-source projects!

In times of npm, PyPI, and GitHub, it’s hard to explain that requiring anything beyond what your operating system offers used to be a controversial decision that had to be weighed judiciously. Often, you shipped your dependencies along with your product.[1]

The new availability is great and variety is healthy, but it leads to fragmentation of the information that you need to be productive.

People have dozens of tabs open[2] with documentation for the packages they’re using at the moment, frantically switching between them to find the right one. As someone who has worked from the best longboarding spot in the world, where tens of thousands of people share a single POP, I can tell you that online-only docs aren’t only a problem when your Internet dies altogether. In particular, an online search function over flaky Internet is worse than no search function at all.

If you’re like me and a polyglot working with multiple programming languages, each with enormous sub-communities (even within Python, Flask + SQLAlchemy + Postgres is a very different beast from writing asyncio-based network servers), it hurts your head to even imagine remembering the arguments of every method you’re using. Especially if you’re really like me and barely remember your own phone number.

That’s why it was such a life-changing event for me when I found Dash in 2012.

API documentation browsers

Your mind is for having ideas, not holding them.

— David Allen

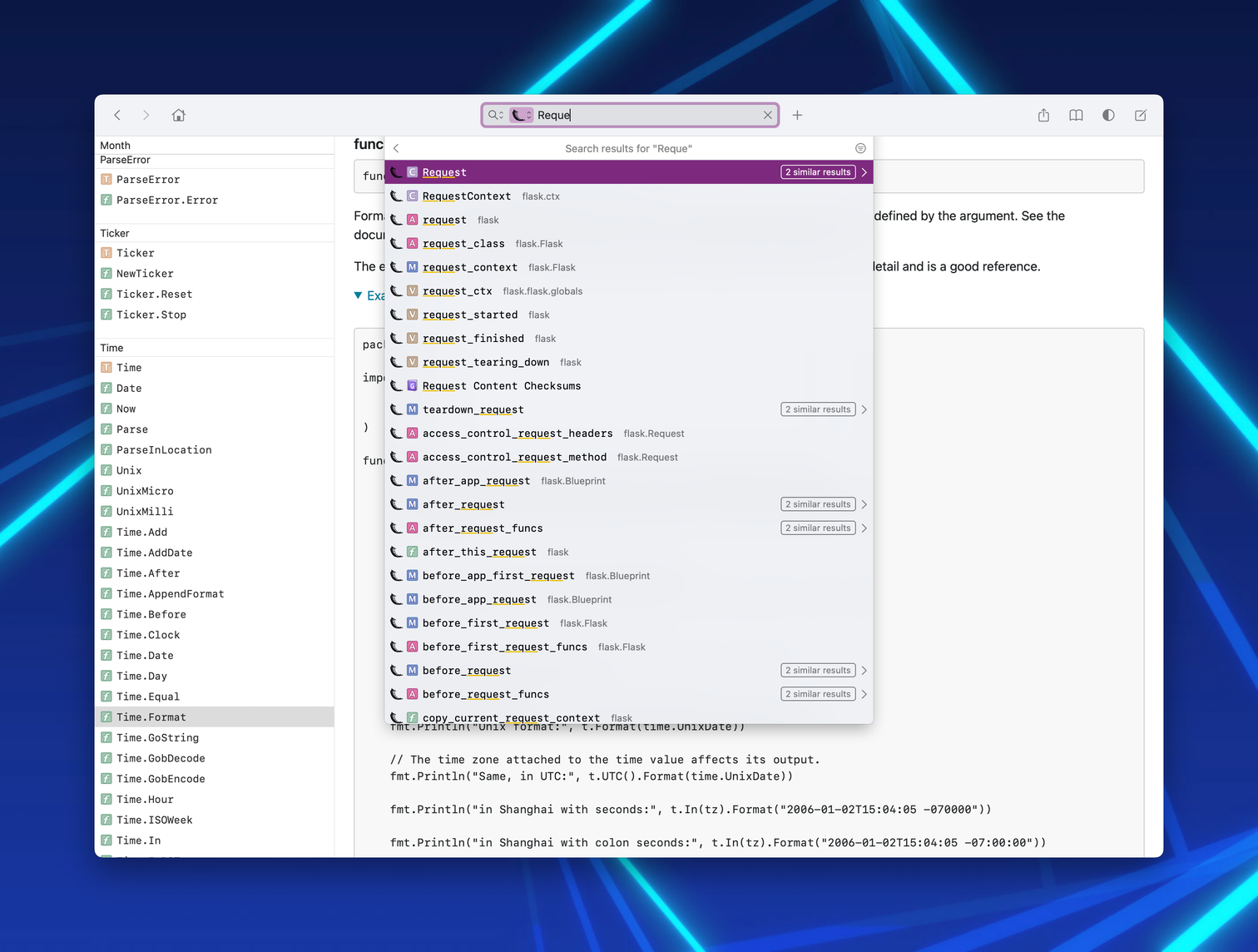

Dash searching

Dash gives me the superpower of having all relevant APIs one key press away:

- I press ⌥Space and a floating window pops up with an activated search bar,

- I start typing the rough name of the API or topic,

- I choose from the suggestions and land on the symbol within the official project documentation,

- I press Escape, the floating window disappears and I can start typing code immediately because my editor is in focus again.

- If I forget what I just read, I press ⌥Space again and the window pops up at the same position.

All this is blazing fast – I want to make such a round trip in under 2 seconds. It has to be that quick so my brain doesn’t lose track of what I was doing. At this point, I can do it subconsciously. It’s the forgotten bliss of native applications – yes, I know about https://devdocs.io.

Having all API docs one key press away is profoundly empowering.

The less energy I spend trying to remember the argument of a function or the import path of a class, the more energy I can spend on thinking about the problem I’m solving.

The rise of generative AI like GitHub Copilot doesn’t change that. While you have to write less code, the importance of understanding code – and hence APIs – is growing.

Even if you’re lucky enough to have early access to GitHub Copilot for Docs or pay for advanced ChatGPT models: They are great at explaining things but way too slow for continuous, quick reference.

While Dash is a $15 / year Mac app, there’s Zeal, a free alternative for Windows and Linux, and Velocity, a $20 Windows app. Of course, there’s also at least one Emacs package doing the same thing: helm-dash.

Meaning: you can have this API bliss on any platform! In the following, I’ll only write about Dash, because that’s what I’m using, but unless noted otherwise, it applies to all of them.

The one thing they have in common is the format of the local documentation.

Documentation Sets

They all use Apple’s Documentation Set Bundles (docsets), which are directories containing the HTML documentation, metadata in an XML-based property list, and a search index in a SQLite database:

```text
some.docset
└── Contents
    ├── Info.plist          # ← metadata
    └── Resources
        ├── Documents       # ← root dir of HTML docs
        │   └── index.html
        └── docSet.dsidx    # ← SQLite db w/ search index
```

If you have a bunch of HTML files on your disk, you can convert them into a docset that can be consumed by Dash. It’s just HTML files with metadata. And since it’s HTML files on your disk, all this works offline.

Therefore, docsets can replace documentation that you already keep locally on your computer for faster and/or offline access without doing anything special. Just package it up into the necessary directory structure, add an empty index, and fill out simple metadata.

Shazam! Now you can conjure them with a single keypress and get rid of them with another.
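To make the structure above concrete, here’s a minimal Python sketch that creates an empty docset skeleton using only the standard library. The Info.plist keys and the searchIndex table layout follow Dash’s docset generation guide as I understand it; the function name make_docset_skeleton and the identifier choices are hypothetical, and doc2dash does all of this (and more) for you.

```python
import plistlib
import sqlite3
from pathlib import Path


def make_docset_skeleton(name: str, dest: Path) -> Path:
    """Hypothetical helper: create an empty <name>.docset bundle inside dest."""
    docset = dest / f"{name}.docset"
    resources = docset / "Contents" / "Resources"
    # Documents/ is the root directory for the HTML files.
    (resources / "Documents").mkdir(parents=True, exist_ok=True)

    # Metadata: an XML property list (plistlib writes XML by default).
    info = {
        "CFBundleIdentifier": name.lower(),
        "CFBundleName": name,
        "DocSetPlatformFamily": name.lower(),
        "isDashDocset": True,
        # Optional: the page Dash opens as "Main Page".
        "dashIndexFilePath": "index.html",
    }
    with (docset / "Contents" / "Info.plist").open("wb") as f:
        plistlib.dump(info, f)

    # Search index: an empty SQLite table that a converter would fill.
    con = sqlite3.connect(resources / "docSet.dsidx")
    try:
        with con:  # commits on success
            con.execute(
                "CREATE TABLE IF NOT EXISTS searchIndex("
                "id INTEGER PRIMARY KEY, name TEXT, type TEXT, path TEXT)"
            )
            con.execute(
                "CREATE UNIQUE INDEX IF NOT EXISTS anchor "
                "ON searchIndex (name, type, path)"
            )
    finally:
        con.close()
    return docset
```

Afterwards, copy your HTML files into Documents. The empty index is enough to get started; filling it with entries is what makes searching useful.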

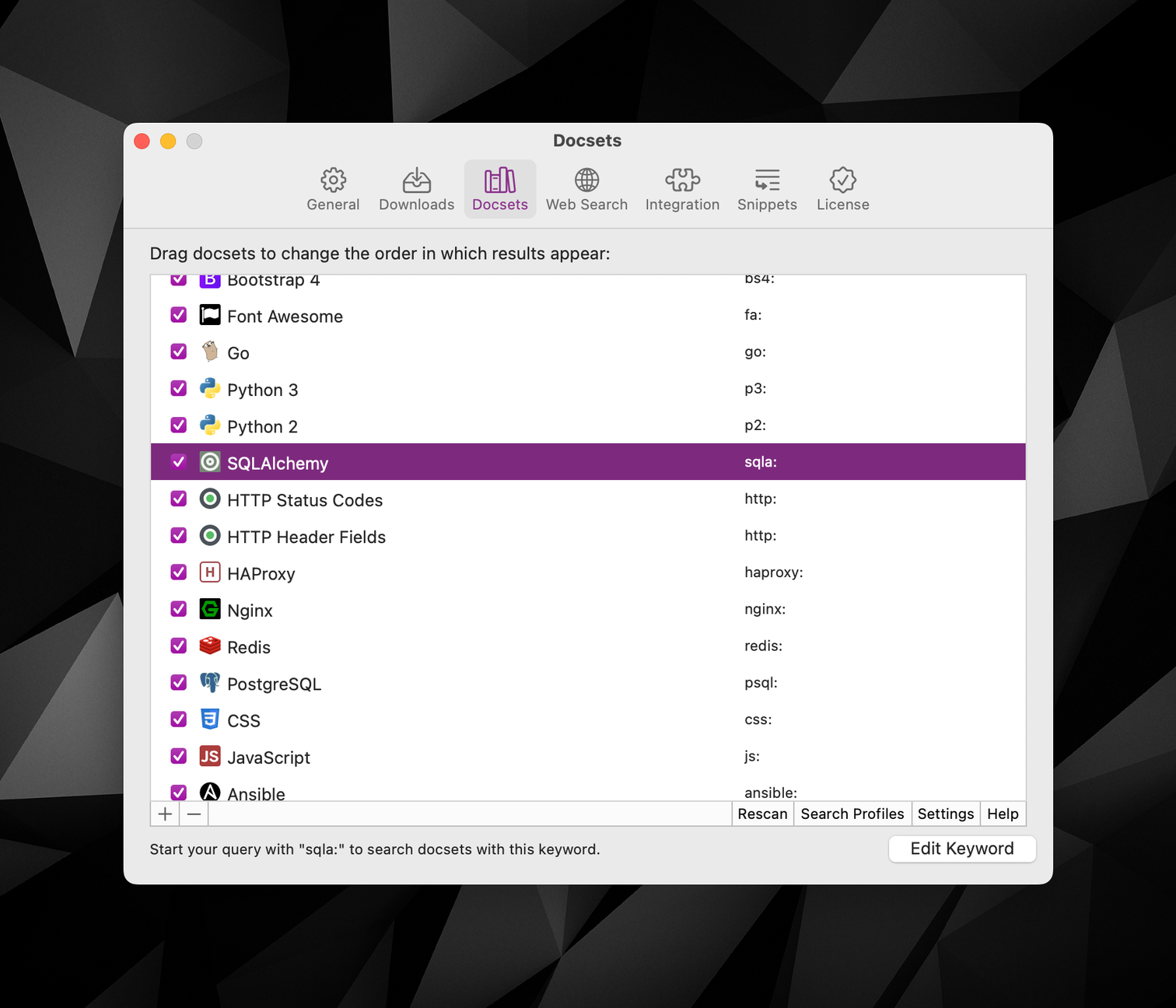

Let’s circle back to the boring history lesson from the beginning: there’s a myriad of projects that I use across countless platforms – every day. And I’m not talking just about programming APIs here: Ansible roles, CSS classes, HAProxy configuration, Postgres (and SQL!) peculiarities … it’s a lot.

Installed Dash docsets

And while Python and Go core documentation ship with Dash, and while Godoc documentation can be added directly by URL[3], no matter how hard Dash tries: in the fragmented world of modern software development, it will never be able to deliver everything I need.

Sphinx

The biggest gap for me is Sphinx-based documentation, which dominates the Python ecosystem (and not only that one).

Sphinx is a language-agnostic framework for writing documentation. Not just API docs or just narrative docs: all of it, with rich interlinking. It used to be infamous for forcing reStructuredText on its users, but nowadays more and more projects use the wonderful MyST package to do it in Markdown. If you have any preconceptions about the look of Sphinx documentation, I urge you to visit the Sphinx Themes Gallery and see how pretty your docs can be. It’s written in Python, but it’s used widely, including by the Linux kernel, Apple’s Swift, the LLVM (Clang!) project, and wildly popular PHP projects.

And it offers exactly the missing piece: an index for API entries, sections, glossary terms, configuration options, command line arguments, and more – all distributed throughout your documentation any way you like, but always mutually linkable. I find this particularly wonderful if you follow a systematic framework like Diátaxis.

The key component that makes this possible is technically just an extension: intersphinx. Originally made for inter-project linking (hence the name), it offers a machine-readable index for us to take. That index grew so popular that it’s now also supported by pydoctor and by the MkDocs extension mkdocstrings. You can recognize intersphinx-compatible documentation by exactly that index file: objects.inv.
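To get a feeling for what that index contains, here’s a simplified Python sketch that reads a version-2 objects.inv: four plain-text header lines, followed by a zlib-compressed list of `name domain:role priority uri dispname` lines. This is not doc2dash’s actual parser, and it skips edge cases that Sphinx’s own inventory loader handles; the names parse_objects_inv and Entry are hypothetical.

```python
import re
import zlib
from typing import NamedTuple

# One entry per line: name, domain:role, priority, uri, display name.
_LINE = re.compile(r"(.+?)\s+(\S+)\s+(-?\d+)\s+(\S*)\s+(.*)")


class Entry(NamedTuple):
    name: str
    role: str  # e.g. "py:function" or "std:term"
    uri: str
    display: str


def parse_objects_inv(data: bytes) -> tuple[str, str, list[Entry]]:
    """Hypothetical parser: return (project, version, entries)."""
    # Four plain-text header lines, then the zlib-compressed payload.
    header = data.split(b"\n", 4)
    if len(header) != 5 or header[0].rstrip() != b"# Sphinx inventory version 2":
        raise ValueError("not a version-2 Sphinx inventory")
    project = header[1].decode().removeprefix("# Project: ").rstrip()
    version = header[2].decode().removeprefix("# Version: ").rstrip()
    body = zlib.decompress(header[4]).decode()

    entries = []
    for line in body.splitlines():
        m = _LINE.match(line.rstrip())
        if m is None:
            continue
        name, role, _priority, uri, display = m.groups()
        if uri.endswith("$"):  # "$" is shorthand for the entry's own name
            uri = uri[:-1] + name
        if display == "-":  # "-" means "same as name"
            display = name
        entries.append(Entry(name, role, uri, display))
    return project, version, entries
```

Each entry roughly corresponds to one row in a docset’s search index: a name, a type derived from the role, and a path into the HTML.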

And that’s why, 10 years ago almost to the day, I started the doc2dash project.

doc2dash

doc2dash is a command line tool that you can get from my Homebrew tap, download as a pre-built binary for Linux, macOS, and Windows from its release page, or install from PyPI.

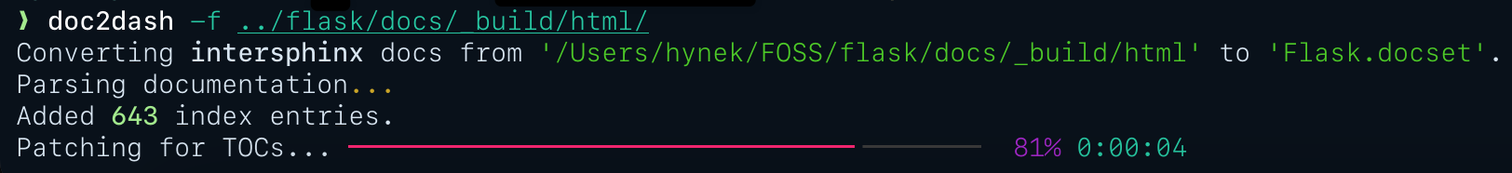

Then, all you have to do is to point it at a directory with intersphinx-compatible documentation and it will do everything necessary to give you a docset.

doc2dash converting

Please note that the name is doc2dash and not sphinx2dash. It was always meant to be a framework for writing high-quality converters, the first ones being for Sphinx and pydoctor. That hope sadly didn’t pan out, because – understandably – every community wanted to use its own language and tools.

Those tools usually look quite one-off to me, though, so I’d like to re-emphasize that I would love to work with anyone who wants to add support for other documentation formats. Don’t reinvent the wheel; the framework is all there! It only takes a few lines of code! You don’t even have to share your parser with me and the world.

The fact that both Dash and doc2dash have existed for well over a decade and I still see friends with a bazillion tabs of API docs open has been positively heartbreaking for me. I keep showing people Dash in action and they keep saying it’s cool and put it on their someday list. Barring another nudge, someday never comes.

While the fruit-fly part of this article ends here, let me try to give you that nudge with a step-by-step how-to guide so that someday becomes today!

How to convert and submit your docs

The goal of this how-to is to teach you how to convert intersphinx-compatible documentation to a docset and how to submit it to Dash’s user-generated docset registry, such that others don’t have to duplicate your work.

I’ll assume you have picked and installed your API browser of choice. It doesn’t matter which one you use, but this how-to guide uses Dash. For optionally submitting the docset at the end, you’ll also need a basic understanding of GitHub and its pull request workflow.

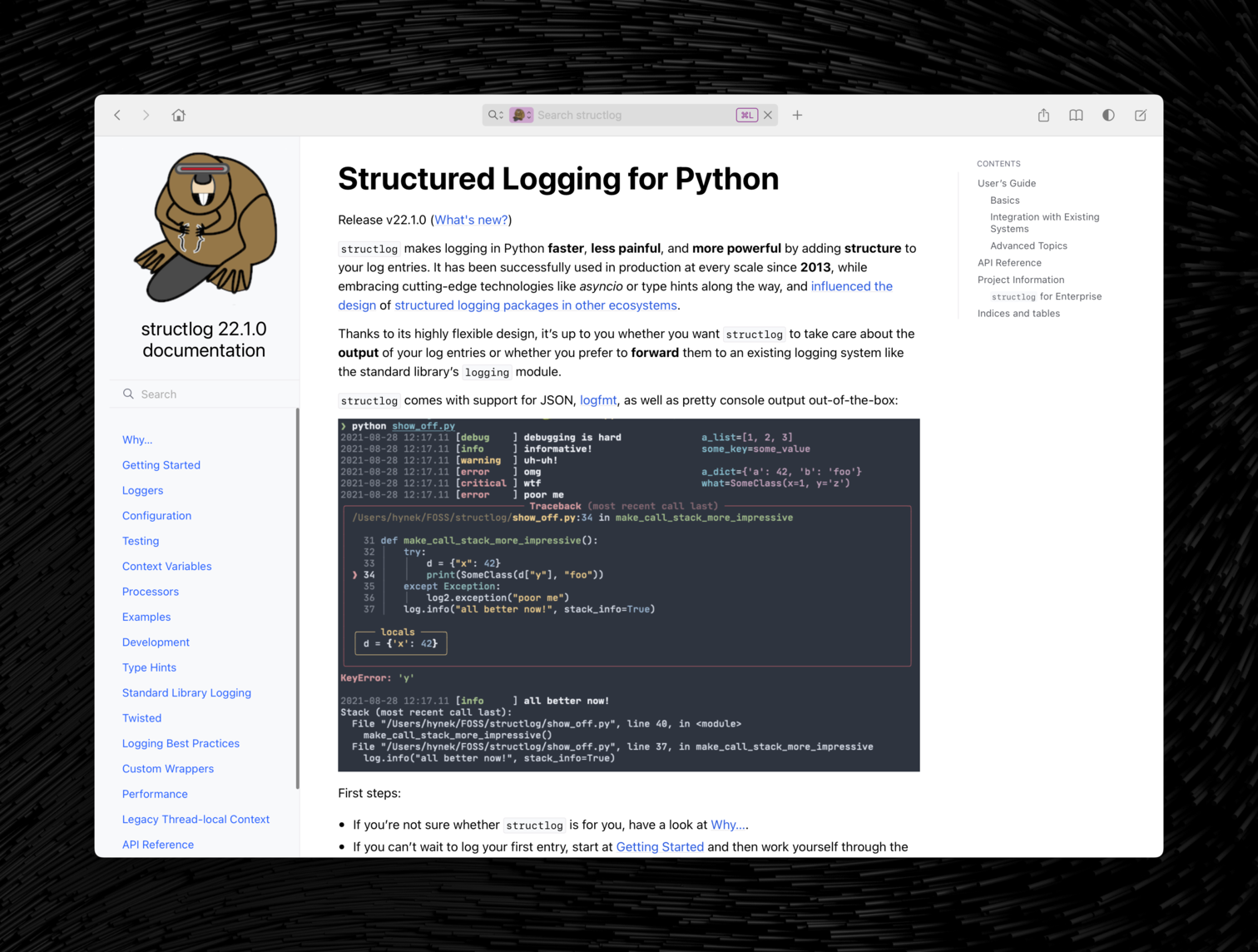

I will be using this how-to as an occasion to finally start publishing docsets of my own projects, starting with structlog. I suggest you pick an intersphinx-compatible project that isn’t supported by Dash yet and whose documentation tab you visit most often.

Let’s do this!

Getting doc2dash

If you’re already using Homebrew, the easiest way to get doc2dash is to use my tap:

```console
$ brew install hynek/tap/doc2dash
```

There are pre-built bottles for Linux on 64-bit Intel, and macOS on both Intel and Apple silicon – therefore the installation should be very fast.

Unless you know your way around Python packaging, the next best option is a pre-built binary from the release page. Currently, it offers binaries for Linux, Windows, and macOS – all on 64-bit Intel. I hope to offer more in the future if this proves to be popular.

Finally, you can get it from PyPI. I strongly recommend using uv, and the easiest way to run doc2dash with it is:

```console
$ uvx doc2dash --help
```

Building documentation

Next comes the biggest problem and source of frequent feature requests for doc2dash: you need the documentation in a complete, built form. Usually, that means you have to download the repository and figure out how to build the docs before you can even run doc2dash, because most documentation sites unfortunately don’t offer a download of the whole thing[4].

My heuristic is to look for a tox.ini or noxfile.py first and see if it builds the documentation. If it doesn’t, I look for a .readthedocs.yaml, and if even that lets me down, I’m on the lookout for files named like docs-requirements.txt or optional installation targets like docs. My final hope is to go through pages of YAML and inspect CI configurations.
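If you do this a lot, the first part of that heuristic can be scripted. Here’s a hypothetical helper that only lists which of the usual suspects exist in a repository checkout, roughly in the order described above; it doesn’t parse anything, so reading the files is still up to you. The exact file list (including .readthedocs.yml, docs/requirements.txt, pyproject.toml, setup.py, and .github/workflows) is my assumption of common locations.

```python
from pathlib import Path

# Hypothetical list of places that often reveal how docs are built,
# roughly in the order of the heuristic above.
CANDIDATES = [
    "tox.ini",
    "noxfile.py",
    ".readthedocs.yaml",
    ".readthedocs.yml",
    "docs-requirements.txt",
    "docs/requirements.txt",
    "pyproject.toml",  # may define an optional "docs" extra
    "setup.py",  # ditto, via extras_require
    ".github/workflows",  # last resort: CI configuration
]


def find_doc_build_hints(repo: Path) -> list[Path]:
    """Return the candidates that exist in repo, most promising first."""
    return [repo / c for c in CANDIDATES if (repo / c).exists()]
```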

Once you’ve managed to install all dependencies, it’s usually just a matter of running make html in the documentation directory.

After figuring this out, you should have a directory called _build/html for Sphinx or site for MkDocs.

Please note that with MkDocs, if the project doesn’t use the mkdocstrings extension – which, alas, is true of virtually all the popular ones right now – there won’t be an objects.inv file and therefore no API data to consume.
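Before converting, you can check whether a build directory contains such an inventory at all. A minimal sketch, assuming the inventory starts with the version-2 header that Sphinx writes (as far as I know, mkdocstrings and pydoctor emit the same format); has_intersphinx_inventory is a hypothetical name.

```python
from pathlib import Path

INVENTORY_HEADER = b"# Sphinx inventory version 2"


def has_intersphinx_inventory(html_dir: Path) -> bool:
    """Return True if html_dir contains an objects.inv with a version-2 header."""
    inv = html_dir / "objects.inv"
    if not inv.is_file():
        return False
    with inv.open("rb") as f:
        # Only the first, uncompressed header line is needed.
        return f.readline().rstrip() == INVENTORY_HEADER
```

You’d point it at _build/html for Sphinx or at site for MkDocs.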

I truly hope that more MkDocs-based projects add support for mkdocstrings in the future! As with Sphinx, it’s language-agnostic.

Converting

After the hardest step comes the easiest one: converting the documentation we’ve just built into a docset.

All you have to do is point doc2dash at the directory with HTML documentation and wait:

```console
$ doc2dash _build/html
```

That’s all!

doc2dash knows how to extract the name from the intersphinx index and uses it by default (you can override it with --name). You should be able to add this docset to an API browser of your choice and everything should work.
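If you’re curious what ended up in the search index, you can peek into the docset’s SQLite database. A small sketch, assuming the searchIndex(name, type, path) table layout from Dash’s docset generation guide, which I believe is what doc2dash writes; list_index_entries is a hypothetical helper.

```python
import sqlite3
from pathlib import Path


def list_index_entries(docset: Path, limit: int = 10) -> list[tuple[str, str, str]]:
    """Return up to `limit` (name, type, path) rows, sorted by name."""
    db_path = docset / "Contents" / "Resources" / "docSet.dsidx"
    con = sqlite3.connect(db_path)
    try:
        return con.execute(
            "SELECT name, type, path FROM searchIndex ORDER BY name LIMIT ?",
            (limit,),
        ).fetchall()
    finally:
        con.close()
```

For example, list_index_entries(Path("structlog.docset")) would show the first ten entries of the docset we just built.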

If you pass --add-to-dash or -a, the final docset is automatically added to Dash when it’s done. If you pass --add-to-global or -A, it moves the finished docset to a global directory (~/Library/Application Support/doc2dash/DocSets) and adds it from there. I rarely run doc2dash without -A when creating docsets for myself.

Improving your Documentation Set

Dash’s documentation has a bunch of recommendations on how you can improve the docset that we built in the previous step. It’s important to note that the next five steps are strictly optional and more often than not, I skip them because I’m lazy.

But in this case, I want to submit the docset to Dash’s user-contributed registry, so let’s go the full distance!

Set the main page

With Dash, you can always search all installed docsets, but sometimes you want to limit the scope of search. For example, when I type p: (the colon is significant), Dash switches to only searching the latest Python docset. Before you start typing, it offers you a menu underneath the search box whose first item is “Main Page”.

When converting structlog’s docs, the main page defaults to the index, which can be useful but usually isn’t what I want. When I go to the main page, I want to browse the narrative documentation.

The doc2dash option to set the main page is --index-page or -I and takes the file name of the page you want to use, relative to the documentation root.

Confusingly, the file name of the index is genindex.html and the file name of the main page is the HTML-typical index.html. Therefore, we’ll add --index-page index.html to the command line.

Add an icon

Documentation sets can have icons that are shown throughout Dash next to the docsets’ names and symbols. That’s pretty, but it also helps you recognize docsets faster and, when you’re searching across multiple docsets, see where a symbol is coming from.

structlog has a cute beaver logo, so let’s use ImageMagick to resize the logo to 16x16 pixels:

```console
$ magick \
    docs/_static/structlog_logo_transparent.png \
    -resize 16x16 \
    docs/_static/docset-icon.png
```

Now we can add it to the docset using the --icon docs/_static/docset-icon.png option.
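Since a wrongly sized icon is easy to miss, here’s a small standard-library sketch that reads a PNG’s width and height from its IHDR header and checks them. The 16x16 default matches the resize above; for the @2x variant used later in the tox configuration, I assume 32x32. png_size and check_icon are hypothetical helpers.

```python
import struct
from pathlib import Path

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def png_size(path: Path) -> tuple[int, int]:
    """Read (width, height) from the IHDR chunk, which comes first in a PNG."""
    with path.open("rb") as f:
        head = f.read(24)
    # 8-byte signature, 4-byte chunk length, 4-byte chunk type, then width/height.
    if len(head) < 24 or head[:8] != PNG_SIGNATURE or head[12:16] != b"IHDR":
        raise ValueError(f"{path} does not look like a PNG file")
    width, height = struct.unpack(">II", head[16:24])
    return width, height


def check_icon(path: Path, expected: int = 16) -> None:
    """Raise ValueError unless the icon is expected x expected pixels."""
    width, height = png_size(path)
    if (width, height) != (expected, expected):
        raise ValueError(f"{path}: expected {expected}x{expected}, got {width}x{height}")
```

For example, check_icon(Path("docs/_static/docset-icon.png")) stays silent if the resize worked.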

Support online redirection

Offline docs are awesome, but sometimes it can be useful to jump to the online version of the documentation page you’re reading right now. A common reason is to peruse a newer or older version.

Dash has the menu item “Open Online Page ⇧⌘B” for that, but it needs to know the base URL of the documentation. You can set that using --online-redirect-url or -u.

For Python packages on Read the Docs, you can choose between stable (the last VCS tag) and latest (the current main branch).

I think latest makes more sense if you leave the comfort of offline documentation, so I’ll add:

```text
--online-redirect-url https://www.structlog.org/en/latest/
```

Putting it all together

We’re done! Let’s run the whole command line and see how it looks in Dash:

```console
$ doc2dash \
    --index-page index.html \
    --icon docs/_static/docset-icon.png \
    --online-redirect-url https://www.structlog.org/en/latest/ \
    docs/_build/html
Converting intersphinx docs from '/Users/hynek/FOSS/structlog/docs/_build/html' to 'structlog.docset'.
Parsing documentation...
Added 238 index entries.
Patching for TOCs... ━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 100% 0:00:00
```

Wonderful:

structlog’s Main Page

Notice the icon in the search bar. Also, pressing ⇧⌘B on any page, at any anchor, takes me to the same place in the latest version of the online docs.
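To double-check what the options did without clicking around, you can read the docset’s Info.plist. This sketch assumes that --index-page and --online-redirect-url end up in the dashIndexFilePath and DashDocSetFallbackURL keys described in Dash’s docset generation guide; docset_settings is a hypothetical helper.

```python
import plistlib
from pathlib import Path


def docset_settings(docset: Path) -> dict[str, object]:
    """Pick the main-page and online-redirect settings out of Info.plist."""
    with (docset / "Contents" / "Info.plist").open("rb") as f:
        info = plistlib.load(f)
    return {
        # Assumed to be set by --index-page (relative to Documents/).
        "index_page": info.get("dashIndexFilePath"),
        # Assumed to be set by --online-redirect-url.
        "online_url": info.get("DashDocSetFallbackURL"),
    }
```

For the structlog docset above, I’d expect index.html and https://www.structlog.org/en/latest/; a None means the option didn’t make it into the docset.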

Automation

Since I want to create a new version of the docsets for every new release, the creation needs to be automated. structlog is already using GitHub Actions as CI, so it makes sense to use it for building the docset too.

For local testing, I’ll take advantage of doc2dash being a Python project and use a tox environment that reuses the dependencies that I use when testing documentation itself.

The environment installs structlog[docs] (i.e., the package with its optional docs dependencies) plus doc2dash. Then it runs these commands in order:

```ini
[testenv:docset]
extras = docs
deps = doc2dash
allowlist_externals =
    rm
    cp
    tar
commands =
    rm -rf structlog.docset docs/_build
    sphinx-build -n -T -W -b html -d {envtmpdir}/doctrees docs docs/_build/html
    doc2dash --index-page index.html --icon docs/_static/docset-icon.png --icon-2x docs/_static/docset-icon@2x.png --online-redirect-url https://www.structlog.org/en/latest/ docs/_build/html
    tar --exclude='.DS_Store' -cvzf structlog.tgz structlog.docset
```

Now I can build a docset just by calling tox -e docset.

Doing that in CI is trivial, but entails tons of boilerplate, so I’ll just link to the workflow. Note the upload-artifact action at the end that allows me to download the built docsets from the run summaries[5].

At this point, we have a great docset that’s built automatically. Time to share it with the world!

Submitting

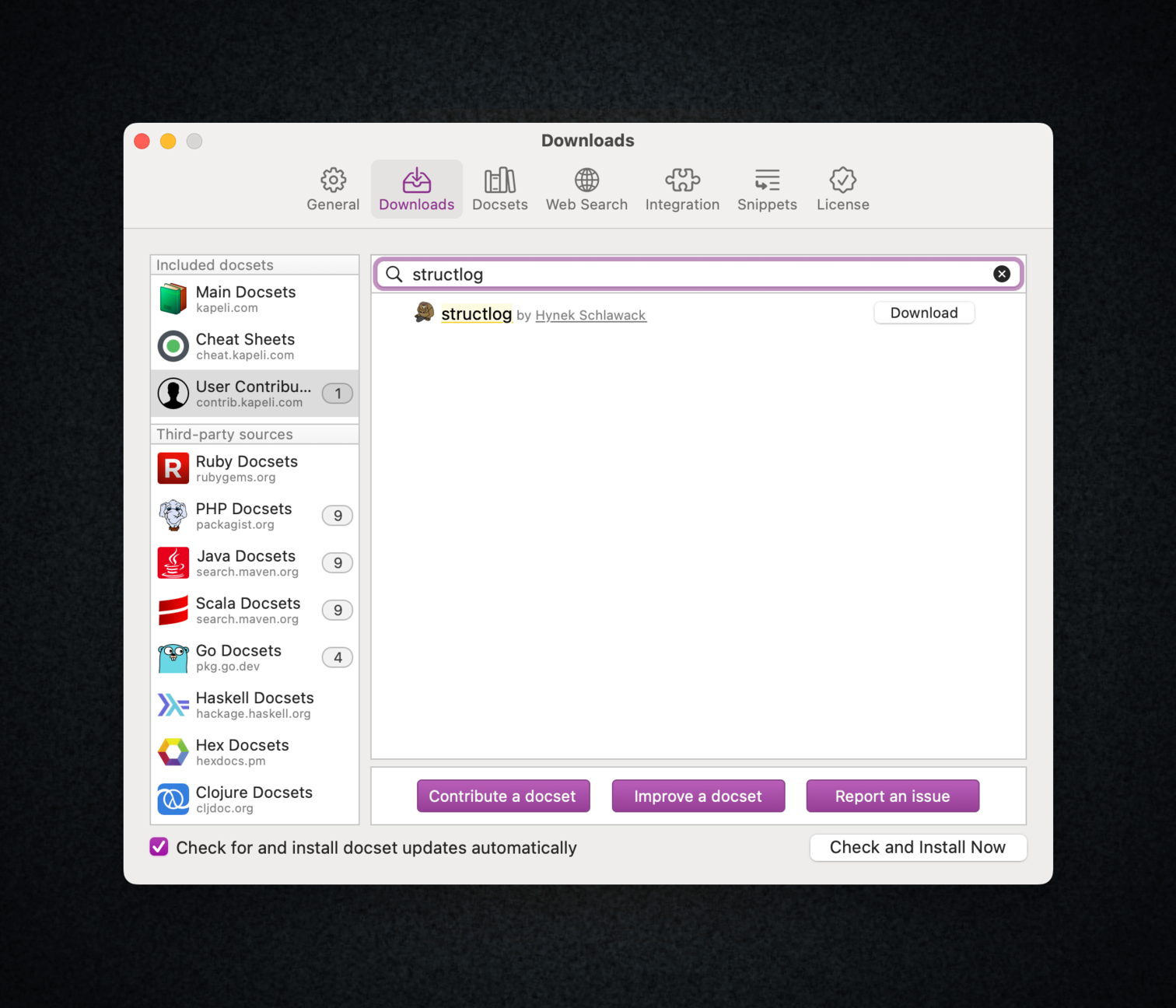

In the final step, we’ll submit our docset to Dash’s user-contributed repository, so other people can download it comfortably from Dash’s GUI. Conveniently, the whole process is built on a concept that’s probably familiar to every open-source aficionado[6]: GitHub pull requests.

The first step is checking the Docset Contribution Checklist. Fortunately, we (or in some cases, doc2dash) have already taken care of everything!

So let’s move right along: fork the https://github.com/Kapeli/Dash-User-Contributions repo and clone it to your computer.

First, copy the Sample_Docset directory into docsets, renaming it in the process. For me, the command line is:

```console
$ cp -a Sample_Docset docsets/structlog
```

Let’s enter the directory with cd docsets/structlog and take it from there.

The main step is adding the docset itself – but as a gzipped Tar file. The contribution guide even gives us the template for creating it. In my case the command line is:

```console
$ tar --exclude='.DS_Store' -cvzf structlog.tgz structlog.docset
```
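If you’d rather not depend on the tar binary (on Windows, for example), Python’s tarfile module can produce an equivalent archive. A minimal sketch; the filter callback drops .DS_Store files much like --exclude does, and pack_docset is a hypothetical name.

```python
import tarfile
from pathlib import Path
from typing import Optional


def _skip_ds_store(info: tarfile.TarInfo) -> Optional[tarfile.TarInfo]:
    # Returning None drops the member, like tar's --exclude='.DS_Store'.
    return None if Path(info.name).name == ".DS_Store" else info


def pack_docset(docset: Path, archive: Path) -> None:
    """Create a gzipped tarball with the docset directory at its top level."""
    with tarfile.open(archive, "w:gz") as tar:
        tar.add(docset, arcname=docset.name, filter=_skip_ds_store)
```

Calling pack_docset(Path("structlog.docset"), Path("structlog.tgz")) mirrors the command line above.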

You may have noticed that I’ve already done the Tar-ing in my tox file, so I just have to copy it over:

```console
$ cp ~/FOSS/structlog/structlog.tgz .
```

It also wants the icons in addition to the ones inside the docset, so I copy them over from the docset:

```console
$ cp ~/FOSS/structlog/structlog.docset/icon* .
```

Next, it would like us to fill in metadata in the docset.json file, which is straightforward in my case:

```json
{
    "name": "structlog",
    "version": "22.1.0",
    "archive": "structlog.tgz",
    "author": {
        "name": "Hynek Schlawack",
        "link": "https://github.com/hynek"
    },
    "aliases": []
}
```
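Since the version changes with every release, generating this file is another candidate for automation. A sketch that writes the same fields as the example above; write_docset_json and its parameters are hypothetical, and the archive name assumes the <name>.tgz convention used here.

```python
import json
from pathlib import Path


def write_docset_json(
    directory: Path, name: str, version: str, author: str, author_link: str
) -> Path:
    """Write docset.json with the fields from the example above."""
    meta = {
        "name": name,
        "version": version,
        "archive": f"{name}.tgz",  # assumes the <name>.tgz convention
        "author": {"name": author, "link": author_link},
        "aliases": [],
    }
    path = directory / "docset.json"
    path.write_text(json.dumps(meta, indent=4) + "\n", encoding="utf-8")
    return path
```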

Finally, it wants us to write some documentation about who we are and how to build the docset. After looking at other examples, I’ve settled on the following:

```markdown
# structlog

<https://www.structlog.org/>

Maintained by [Hynek Schlawack](https://github.com/hynek/).

## Building the Docset

### Requirements

- Python 3.10
- [*tox*](https://tox.wiki/)

### Building

1. Clone the [*structlog* repository](https://github.com/hynek/structlog).
2. Check out the tag you want to build.
3. `tox -e docset` will build the documentation and convert it into `structlog.docset` in one step.
```

The tox trick is paying off – I don’t have to explain Python packaging to anyone!

Don’t forget to delete stuff from the sample docset that we don’t use:

```console
$ rm -r versions Sample_Docset.tgz
```

We’re done! Let’s check in our changes:

```console
$ git checkout -b structlog
$ git add docsets/structlog
$ git commit -m "Add structlog docset"
[structlog 33478f9] Add structlog docset
 5 files changed, 30 insertions(+)
 create mode 100644 docsets/structlog/README.md
 create mode 100644 docsets/structlog/docset.json
 create mode 100644 docsets/structlog/icon.png
 create mode 100644 docsets/structlog/icon@2x.png
 create mode 100644 docsets/structlog/structlog.tgz
$ git push -u origin structlog
```

Looking good – time for a pull request!

A few hours later:

Our contributed structlog docset inside Dash!

Big success: everyone can now download the structlog Documentation Set, which concludes our little how-to!

Closing

I hope I have both piqued your interest in API documentation browsers and demystified the creation of your own documentation sets. My goal is to turbocharge programmers who – like me – are overwhelmed by all the packages they have to keep in mind while getting stuff done.

My biggest hope, though, is that this article inspires someone to help me add more formats to doc2dash, such that even more programmers get to enjoy the bliss of API documentation at their fingertips.

Another hope I’ve developed after publishing this article is that Zeal gets revitalized. Reportedly, it’s a bit long in the tooth, with the last release dating back four years. Since it’s an open-source project, it would be cool if it could get a few new hands, so that we could have good API browsers on all platforms.

I’ve done a terrible job at promoting doc2dash in the past decade and I hope the next ten years will go better!

Since I’ve been accused of this article being an ad: I have no business relationship with the author of Dash, and he didn’t know I was writing this article until I showed him a late draft for fact-checking.

Sometimes people like products that cost money, and it should be possible to write about them if they’ve been transformative to one’s professional life. Especially if they come from an indie developer.

[1] Which is exactly what Electron has brought back for better and for worse.

[2] Hot take: if you need extensions to manage your tabs, you’re poorly imitating bookmarks while wasting RAM.

[3] Although there is an argument to be had about how well the separation of narrative documentation and API docs works. While it’s great to have a simple, standardized format for APIs, most complex packages need narrative documentation that is entirely isolated.

[4] I’ve been thinking about writing a client-side Read the Docs client that interprets .readthedocs.yaml files and builds the documentation itself, but there’s simply too much on my plate right now. You can have this idea and become a hero.

[5] Since I’m building a docset for a version from the past, I had to cheat a bit: once I check out the 22.1.0 tag, the docset environment is gone from tox.ini and the icons don’t exist in docs/_static either. Therefore, I had to run the steps by hand and keep copies of the icons outside the repo.

[6] If not: this is a great opportunity to practice in a low-stakes environment.