Codecov’s unreliability breaking CI on my open source projects has been a constant source of frustration for me for years. I have found a way to enforce coverage over a whole GitHub Actions build matrix that doesn’t rely on third-party services.

2024-09-03 Another public holiday, another curveball. This time GitHub decided to add opt-out filtering of hidden files (like .coverage.*!) to actions/upload-artifact 4.4.0 (yes, a minor release, SemVer still won’t save us), breaking the CI of everyone who relies on this method.

You now need to add include-hidden-files: true to your upload step. I’ve updated the examples below accordingly.

Warning: In December 2023, GitHub rolled out version 4 of their actions/upload-artifact and actions/download-artifact actions.

Version 4 is not compatible with the approach that this article used before I updated it on 2024-01-02. You either need to keep those actions pinned to v3 or adapt your workflows to the new approach. The rest of this text box is only relevant if you have the old version in place and want to upgrade to version 4. Otherwise, skip the next paragraph.

Fortunately, after some complaining, GitHub added tools to make it straightforward: add every dimension of your build matrix to the artifact name (for example, name: coverage-data-${{ matrix.python-version }}) when uploading; when downloading, replace name: coverage-data with pattern: coverage-data-* and add merge-multiple: true.
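For those upgrading, the change can be sketched as a before/after pair. The v3 half is an illustration of the old single shared artifact name, not a copy of any particular workflow, and the upload and download steps live in different jobs:

```yaml
# Before (v3): every matrix job uploads into the same artifact name.
- uses: actions/upload-artifact@v3
  with:
    name: coverage-data
    path: .coverage.*

# In the coverage job:
- uses: actions/download-artifact@v3
  with:
    name: coverage-data

# After (v4): one uniquely named artifact per matrix job...
- uses: actions/upload-artifact@v4
  with:
    name: coverage-data-${{ matrix.python-version }}
    path: .coverage.*
    include-hidden-files: true

# ...merged back into one directory when downloading in the coverage job.
- uses: actions/download-artifact@v4
  with:
    pattern: coverage-data-*
    merge-multiple: true
```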

Coverage.py has had an option to fail the call to coverage report for a while: either the setting fail_under=XXX or the command line option --fail-under=XXX, where XXX can be whatever percentage you like. The only reason why I’ve traditionally used Codecov (including in my Python in GitHub Actions guide) is that I need to measure coverage over multiple Python versions that run in different containers. Therefore I can’t just run coverage combine; I need to store the coverage data somewhere between the various build matrix items.

Unfortunately, Codecov has grown very flaky. I have lost all confidence in its verdicts: when it fails a build, my first reaction is always to restart the build and only then investigate. Sometimes the upload fails, sometimes Codecov fails to report its status back to GitHub, sometimes it can’t find the build, and sometimes it reports an outdated status. What a waste of computing power. What a waste of my time, clicking through their web application, seeing everything green, yet the build is failing due to missing coverage.

When I complained about this once again and even sketched out my idea of how it could work, I was told that the cookiecutter-hypermodern-python project has already been doing it and there’s a GitHub Action taking the same approach!

So I removed Codecov from all my projects and it’s glorious! Not only did I get rid of a flaky dependency, it also simplified my workflow. The interesting parts are the following:

After running the tests under coverage in --parallel mode, upload the coverage files as artifacts:

    - name: Upload coverage data
      uses: actions/upload-artifact@v4
      with:
        name: coverage-data-${{ matrix.python-version }}
        path: .coverage.*
        include-hidden-files: true
        if-no-files-found: ignore

You need this for every item in your build matrix whose coverage you want to take into account. As of upload-artifact v4, you have to add every dimension of your matrix to the name. This example assumes that your matrix only consists of Python versions, which is true for most Python projects.

I use if-no-files-found: ignore, because I don’t run all Python versions under coverage. It’s much slower and I don’t need every Python version to ensure 100% coverage.
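To show where the upload step sits, here is a minimal sketch of a tests job. The version list, the use of pytest, and the pip-based install are assumptions for illustration; your project may well use tox, nox, or something else entirely:

```yaml
  tests:
    name: Tests on ${{ matrix.python-version }}
    runs-on: ubuntu-latest
    strategy:
      fail-fast: false
      matrix:
        # Hypothetical version list; use whatever your project supports.
        python-version: ["3.10", "3.11", "3.12"]

    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with:
          python-version: ${{ matrix.python-version }}

      # Assumption: the project installs with pip and is tested with pytest.
      - run: python -m pip install --upgrade pytest "coverage[toml]" .

      # Parallel mode writes uniquely named .coverage.* files instead of a
      # single .coverage file, so they can be combined in a later job.
      - run: python -m coverage run --parallel-mode -m pytest

      - name: Upload coverage data
        uses: actions/upload-artifact@v4
        with:
          # With a second matrix dimension (say, a hypothetical matrix.os),
          # the name would need it too:
          # coverage-data-${{ matrix.os }}-${{ matrix.python-version }}
          name: coverage-data-${{ matrix.python-version }}
          path: .coverage.*
          include-hidden-files: true
          if-no-files-found: ignore
```

The job key tests is what the needs: setting of a downstream job refers to, so the two have to match.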

After all tests passed, add a new job:

    coverage:
      name: Combine & check coverage
      if: always()
      needs: tests
      runs-on: ubuntu-latest

      steps:
        - uses: actions/checkout@v4
        - uses: actions/setup-python@v5
          with:
            # Use latest Python, so it understands all syntax.
            python-version: "3.12"

        - uses: actions/download-artifact@v4
          with:
            pattern: coverage-data-*
            merge-multiple: true

        - name: Combine coverage & fail if it's <100%
          run: |
            python -Im pip install --upgrade coverage[toml]

            python -Im coverage combine
            python -Im coverage html --skip-covered --skip-empty

            # Report and write to summary.
            python -Im coverage report --format=markdown >> $GITHUB_STEP_SUMMARY

            # Report again and fail if under 100%.
            python -Im coverage report --fail-under=100

        - name: Upload HTML report if check failed
          uses: actions/upload-artifact@v4
          with:
            name: html-report
            path: htmlcov
          if: ${{ failure() }}

It sets needs: tests to ensure all tests are done, but it also sets if: always() so that the coverage is measured even if one of the tests jobs fails. If your job that runs tests has a different name, you will have to adapt this. Check out the full workflow if you’re unsure where exactly to put the snippets.

In a nutshell:

- It downloads the coverage data that the tests uploaded as artifacts,

- combines it,

- creates an HTML report,

- creates a Markdown report that is added to the job summary,

- and finally checks if coverage is 100%, failing the job if it is not. If – and only if! – this step fails (presumably due to a lack of coverage), it also uploads the HTML report as an artifact.

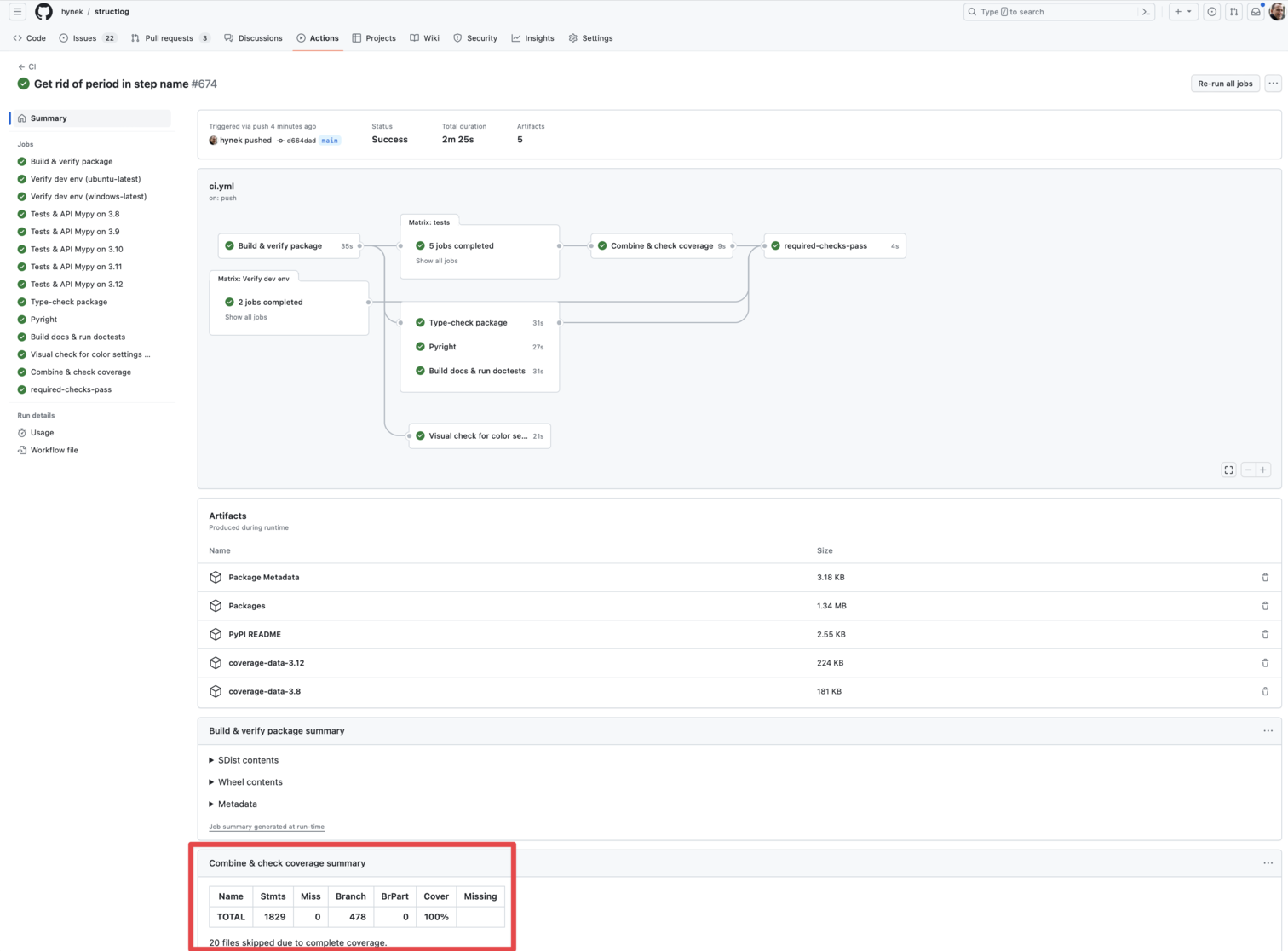

Once the workflow is done, you can see the plain-text report at the bottom of the workflow summary page. If the coverage check failed, there’s also the HTML version for download.

The workflow summary with a plain-text report.

If you’d like a coverage badge, check out Ned’s guide: Making a coverage badge.

Note on uv

You can use uv by simply replacing python -Im pip install with uv pip install --system, but given uv’s overall philosophy, it’s nicer to use it in uv tool mode:

    - name: Combine coverage & fail if it's <100%.
      run: |
        uv tool install 'coverage[toml]'

        coverage combine
        coverage html --skip-covered --skip-empty

        # Report and write to summary.
        coverage report --format=markdown >> $GITHUB_STEP_SUMMARY

        # Report again and fail if under 100%.
        coverage report --fail-under=100

Please note that you have to install uv first, for example using Astral’s official setup-uv or my setup-cached-uv actions.
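As a sketch of the ordering, the uv setup step goes before the step that calls uv tool install. The v3 tag below is an assumption about which release to pin; check the action’s releases for the current one:

```yaml
      # Assumption: pinned to a major version tag; newer releases may exist.
      - uses: astral-sh/setup-uv@v3

      - name: Combine coverage & fail if it's <100%.
        run: |
          uv tool install 'coverage[toml]'
          coverage combine
          coverage report --fail-under=100
```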

History

2024-09-16: Added a note on uv.

2024-09-03: Fixed hidden files upload on actions/upload-artifact v4.4.0.

2024-01-02: Adapted to v4 of actions/upload-artifact and actions/download-artifact.

2023-07-24: coverage report --format=markdown is a thing “now”!

2023-04-12: updated with plain-text reporting.